Data Governance Platforms

Given the importance of data governance it is hardly surprising that there are technologies geared up to help with your efforts. They come in various shapes and sizes with accompanying price tags. In this post we’ll take a look at their general benefits and moving parts to help you decide how they might feature in your implementation.

Why Consider a Data Governance Platform?

Depending on the size of your data governance program, you may find significant benefit from the use of a dedicated data governance platform. Some high-level reasons for implementing a platform to assist with your data governance are outlined below.

Centralised Data Governance Efforts

The platform will provide functionality such as portals, approval workflows and repositories of information around data governance all from one location. This allows for better communication and collaboration across the program and organisational transparency around roles and responsibilities. Governance silos are avoided and universal approaches are more readily available.

Increased Productivity of Regular Tasks

Data governance platforms have various tools to assist with taking the ‘spade work’ out of the program implementation. These are becoming more effective with the addition of AI elements to products.

Complementary Data Management Tools

Your data management efforts will benefit from the various data cataloguing, quality and lineage tools that these platforms provide. In fact your data management team may well have already invested in these tools for just that purpose. Providing these insights in one platform will significantly increase productivity and ‘quality of life’ of data management teams.

When to Implement a Data Governance Platform

The main purposes of these platforms are to ease the implementation of the data governance program and assist with data management aspects across the estate. Too often however companies will rush out and purchase a platform way too early within the program. The product sits there unused, with a relatively noticeable price tag attached. There is little or no point from a data governance point of view in having a platform without having your strategy, principles, policies and at least some of your processes well defined. To state what is often missed here, wait until you really have things to govern and a need for this platform.

The main purposes of these platforms are to ease the implementation of the data governance program and assist with data management aspects across the estate. Too often however companies will rush out and purchase a platform way too early within the program. The product sits there unused, with a relatively noticeable price tag attached. There is little or no point from a data governance point of view in having a platform without having your strategy, principles, policies and at least some of your processes well defined. To state what is often missed here, wait until you really have things to govern and a need for this platform.

In the meantime gain an understanding of the return on investment of the data governance program through its agreed budget and business capability improvement. Look at how your data governance processes would benefit from the functionality on offer and go through a thorough product selection process. Any vendor selection process can take some time, with their sales teams ordinarily prioritising on reeling in potentially larger prospects. You may also find platform offerings evolving during the process as new tooling and integrations are added.

Functionality and Value-Add

With the above points in mind, there are various areas of functionality that really add to the value of the platform implementation.

Data Quality

This subject area has a well established selection of products that identify, process and improve the quality of data. Some vendors are stronger than others in their offerings here, but the overall functionality they offer can be summed up as:

- Data Profiling for identification of problem areas for data quality efforts

- Data Rectification for assisting with upstream data quality correction, through either direct processing or via data validity reports and exports

- Data Observability to help with operational elements of data quality and related challenges

Where a vendor has an accompanying ETL or data processing toolset these are generally integrated into the above areas. Data observability for example will benefit from greater lineage detail for products that provide this natively to the platform.

Not all vendors leverage the underlying available data processing compute resources for data quality tasks, often offloading to their bespoke data management platform infrastructure. This can prove costly and time consuming when compared to the existing resources such as big data platforms. This aspect of efficient data quality processing should be carefully considered when selecting a suitable platform.

Data Security and Privacy

This critical subject in any data governance program has many touch points and facets to consider. There are numerous ways that your chosen platform can assist with this, with varying functionality in this area across vendors.

Some key items are listed below:

- Definition of roles and assigned personnel

- Identification and classification of data assets for security and privacy considerations

- Auditing of data access, generally from digesting various access logs from data management products and document management systems

Data Transparency

Awareness of, and access to, data products and assets are key considerations for a successful data governance initiative. Data governance platforms leverage various repositories and portals to assist in data awareness and understanding across the organisation.

Awareness of, and access to, data products and assets are key considerations for a successful data governance initiative. Data governance platforms leverage various repositories and portals to assist in data awareness and understanding across the organisation.

Here are some of the approaches taken:

- Data Catalogues for definitions of data assets and products, business glossaries and approval taxonomies

- Metadata capture of existing data assets through trawlers and scanners that either pull or push metadata from known sources

- Lineage of data assets and products determined from data processing and management operations

- A ‘Data Marketplace’ concept for certifying, publishing and promoting data assets across the organisation

Operational Efficiency

The growing demands on data management teams to help derive business value from data place significant challenges in a growing data landscape. To remain competitive, associated costs and productivity need to be carefully managed. There are a number of benefits that can be gained from a well implemented and populated data governance platform, such as:

- Identification of duplicated processes and data assets

- Monitoring of processing performance and associated resource usage

- Visibility of processes and associated outputs to help derive associated business value

Key Components

The following components are essential to any data governance platform.

Metadata Catalogue

This is the main repository for metadata and definitions of elements of data management and governance. Centralising this information for transparent access is hugely beneficial in any but the most simplistic of programs.

This is the main repository for metadata and definitions of elements of data management and governance. Centralising this information for transparent access is hugely beneficial in any but the most simplistic of programs.

Most products incorporate the three areas of metadata generally included within data governance, being:

- Operational metadata, providing data provenance, processing and data observability information

- Generally more detailed when coupled with integrated data processing platforms from the same vendor

- Business metadata, such as glossaries, definitions of metrics and other standards

- Technical metadata, describing data assets and their classifications, data sources, transformation mappings and similar structured and semi-structured data definitions

This metadata will be the lifeblood of much of the program information, and can play a significant part in data management simplification through metadata-driven processes. This component should be scrutinised regarding content capability, ease of use and metadata discovery.

Metadata Discovery

Populating the metadata repository in an efficient manner will provide much needed details on the various data assets, sources and related processing that is embedded within various data management products across the organisation.

Population is initiated through the following methods:

- User-initiated discovery requests, targeting a specific source of metadata

- Automated scans via metadata crawler services to collate metadata from various sources

Connectivity to metadata sources varies although most vendors capture the most common cases. The ease with which metadata can be onboarded should be a key consideration with respect to your current and future data estate.

Collaboration Portal

Many programs will use technology such as SharePoint for document collaboration across the program. Some products do have their own portal for hosting the various documents on policies, processes, charters, role definitions and other items of interest to organisational data governance. Either way, a portal for all things data governance is strongly recommended for efficient communication and knowledge sharing.

Extended Components

In addition to the above, many vendors offer additional elements within their data governance suite of products. Depending on your particular priorities these may make the list of ‘must haves’ in your considerations.

Data Access Auditing Services

Data access considerations require no real introduction and are front and centre of any security aware data management team. Many organisations are adopting what is often termed a ‘zero-trust’ approach to data access. This involves closely monitoring privileges to all data sources, and ensuring that data access is tracked through various auditing components. This is particularly challenging given the multitude of data stores that exist in even a modest sized organisation. Document stores are one area that are often overlooked, with the auditing of their access being difficult to achieve without close integration into vendor document store products.

AI Model Management

With increasing use of AI models in companies of all sizes comes associated management challenges. In particular, in light of recent regulatory expectations around suitability of AI and ML outputs for use in business processes, performance of these models is particularly important. Model management tools address the following:

- Understanding model trends and accuracies over time

- Determining computational performance for cost-effective computational resource usage

- Addressing input dataset and model parameter traceability, for reasons such as compliance, privacy and ethics

- Avoidance of bias such as towards demographic groups, particularly regarding protected characteristics such as race, age, disability and sexual orientation, through understanding of model parameter data distributions

Data Quality Engine

Given the generally high priority of data quality within a data governance program, often with considerable value-add attached, this component for many will be promoted to a key constituent. As mentioned above, functionality and implementation varies across vendors and as such should be given careful consideration.

AI Assistance

This can provide considerable productivity benefits for data catalogue-related activities. Categorising and labelling various assets and their attributes according to predefined or newly discovered classifications of data is accomplished through data profiling of samples and applying various matching algorithms. Suggestions on data quality rules based on classifications and attribute types are also easily generated. As you can appreciate, automating these repetitive and time consuming tasks frees up data professionals to apply themselves elsewhere in more specialised activities.

Generative AI

Generative AI tools allow greater assistance beyond the automating of low hanging tasks. Through models focused on the data estate, profiles of organisational data and known applications and outputs, understanding large bodies of information is made considerably simpler. Data discovery challenges such as finding good candidate attributes for customer loyalty indicators are made simpler.

This ability to reason around and through your data improves transparency and productivity. There is less need to to drill into great detail to understand what’s out there. Marketed as the ‘next generation of data governance tools’, these data context-aware assistants are driven by models trained using metadata and data profiling. As they provide more insight and improve on data-related understanding, so their models benefit. More focused and relevant model input further improves accuracy and applicability in a self-learning fashion.

Conclusion

Data governance platforms may prove essential for some organisations in their quest for ‘data governance law and order’. Don’t assume however that you can throw technology at the challenge and sit back.

Without all the other elements we’ve been discussing such as business capability understanding, stakeholder buy-in, strategy, policies and well structured teams the platform will at best be something of a white elephant. The technology decisions should be amongst the last elements to put in place before you grow the program. Only with all other items defined will you truly know what you need from the platform. At this point you can decide on an implementation confident that it will help drive your business capability improvement initiative that is data governance.

Implementing Your Data Governance Solutions

If you’d like to discuss any aspects of your data governance program, whether defining goals, deploying solutions or anywhere in between, please don’t hesitate to get in touch. Our data governance service is a flexible, coworking approach that provides assistance wherever you are on your journey.

Data Governance Frameworks

The implementation of a data governance program involves a number of capabilities and disciplines. We have spoken about key objectives and some common challenges. We’ve also discussed approaches to progressing with the work. Data governance is certainly not new, and as such there are considerable bodies of work that will help with your efforts. For something as involved as data governance, even in smaller organisations, we can look to frameworks to guide our efforts.

In this post we’ll summarise the most common data governance frameworks to help you determine their applicability for your initiative. Their content should be viewed as elements for probable inclusion, with varying priorities across different organisations and business sectors. They may help you determine team structures and will definitely form various implementation areas for backlogs and roadmaps. As we talked about previously, look to roll these out over time based on understanding of requirements, stakeholder priorities and ability to execute.

Summary of Popular Data Governance Frameworks

Provided here is a short summary of each of the featured frameworks and a list of their components to assist you with deciding on which you may want to consider. Each brings their own unique take on the subject. They offer valuable insight into what lies ahead and will help considerably with structuring your approach.

DAMA DMBOK

The DAMA Data Management Body of Knowledge (DMBOK) is available for purchase from DAMA International. As you would expect from DAMA, it is a well structured and detailed resource that is well worth the admission price. At nearly 600 pages, it is an all-inclusive guide to data management. It comprises a data management framework composed of the items below:

- Data Governance

- Data Architecture

- Data Modeling and Design

- Data Storage and Operations

- Data Security

- Data Integration and Interoperability

- Document and Content Management

- Reference and Master Data

- Data Warehousing and Business Intelligence

- Metadata Management

- Data Quality Management

- Big Data and Data Science

© 2017 DAMA International

Data Governance is seen to be the central theme that touches all elements of data management.

The data governance program is further shown as a set of processes and disciplines that surround and influence data management foundational activities and lifecycle management.

These areas will undoubtedly feature to a greater or lesser extent within your own data governance efforts. Each organisation will seek to tailor their efforts according to business need and gaps.

The section on data governance includes steps for implementation as below:

- Assess their current data management maturity

- Identify gaps and areas for improvement

- Develop a roadmap for implementation

- Assign roles and responsibilities

- Provide training and support

- Monitor and measure progress

© 2017 DAMA International

This provides a robust approach to defining and assessing progress of your data governance program against a proven framework. It is a popular choice, being applicable to organisations of varying size. You can find the DAMA DMBOK on Amazon (other booksites are available) or the DAMA International store at https://technicspub.com/dmbok2/.

DAMA also provide images of each of the areas of the framework from the DMBOK for download, available at https://www.dama.org/cpages/dmbok-2-image-download. An example is given below for the data architecture aspects.

The Data Governance Institute Framework

This body exists to provide guidance and expertise on implementing data governance. They have been around for 20 years and offer a huge amount of incredibly insightful material and practical advice around how to succeed in data governance. Their data governance framework moves the focus away from familiar aspects of data management and towards more governance “rules of engagement”. The majority of their articles are free, with a small number of more specialised articles requiring a modest subscription to access. The various components are listed below:

- Mission and Value

- Beneficiaries of Data Governance

- Data Products

- Controls

- Accountabilities

- Decision Rights

- Policy and Rules

- Data Governance Processes, Tools, and Communication

- DG Work Program

- Participants

This is arranged into the framework as shown below.

There are additional articles on aspects such as funding models for data governance, how focus areas may differ in programs, governance models and more. In essence just about every aspect of the initiative is considered. Even if you decide not to use this framework their articles are definitely worth a visit and not at all heavy going.

IBM Data Governance Council Maturity Model

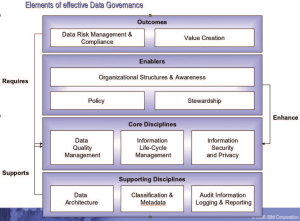

This free resource is focused solely on data governance as a discipline and how to grow this within the organisation. It also uses the concept of a ‘Maturity Model’ to assess capability and track progress. Although only 16 pages it still manages to provide a valuable structure upon which to base your initiative. The concept of data governance is divided into eleven framework elements or ‘domains’, as listed below:

- Organisational Structures and Awareness

- Stewardship

- Policy

- Value Creation

- Data Risk Management and Compliance

- Information Security and Privacy

- Data Architecture

- Data Quality Management

- Classification and Metadata

- Information Lifecycle Management

- Audit Information, Logging and Reporting

Some of these are similar to those within the DAMA DMBOK, although concepts outside data management are also included. These are grouped into functions of Outcomes, Enablers, Core Disciplines and Supporting Disciplines as shown below.

This provides an intuitive view of how elements interact and will assist with planning and prioritising work. When drilling down into the various areas of the model you will however need to consult other more specialised resources to determine implementation details.

CMMI Data Management Maturity Model (DMM) – Discontinued

This is no longer in service, having been discontinued recently. If you are using CMMI approaches to oganisation management you may find aspects that can be applied to data management, however there is no longer a specific model provided.

Implementing a Data Governance Framework

In the interests of reducing lead-time and delivering value early and often, as previously mentioned in Getting Started with Data Governance an Agile delivery method works well. To recap on the points we previously made, focusing on high-priority items that have a low risk of not being delivered with your work iterations will allow progress in the areas that matter most. If an area is required urgently but is poorly defined, focus on bringing the definition of requirements to a level that allows work to move forward as soon as possible. Once items start being delivered, momentum and enthusiasm will build, helping to drive further value.

In the interests of reducing lead-time and delivering value early and often, as previously mentioned in Getting Started with Data Governance an Agile delivery method works well. To recap on the points we previously made, focusing on high-priority items that have a low risk of not being delivered with your work iterations will allow progress in the areas that matter most. If an area is required urgently but is poorly defined, focus on bringing the definition of requirements to a level that allows work to move forward as soon as possible. Once items start being delivered, momentum and enthusiasm will build, helping to drive further value.

Applying Data Governance in Small to Medium Enterprises (SMEs)

The need for some degree of data governance will be required in organisations wherever data exists, regardless of size. For an organisation owning and processing only a very small amount of ‘low-risk’ data, a smaller program may suffice. A very light touch program prioritising on data security and operations for example may address most concerns. Obviously larger organisations with larger data estates will require more aspects to be covered in greater depth and breadth. It may prove unfeasible to try and cover all aspects of a framework within an SME, however all aspects should be discussed and prioritised accordingly. A reduced framework for initial delivery can then be defined and added to as needed. Items from the DAMA DMBOK worth considering as a first pass for SME data governance frameworks might include:

- Data Governance

- Data Architecture

- Data Storage and Operations

- Data Security

- Data Warehousing and Business Intelligence

- Data Quality Management

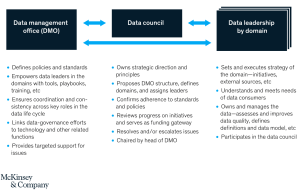

You will still want to consider the benefits of establishing a Data Management Office (DMO) and identifying data domains and their respective leaders/owners. The remit of the DMO and the size and number of the data domains will be scaled down but still provide essential functions.

A great reference for considering data governance frameworks for SMEs can be found at https://cornerstone.lib.mnsu.edu/cgi/viewcontent.cgi?article=2125&context=etds, in the form of a thesis submitted for an MSc in Data Science. It also provides a good overview of recent data legislation to be aware of.

Further Reading

The Data Governance Institute has a great round-up of books on data governance.

https://datagovernance.com/bookstore/

In particular “

The DAMA DMBOK website also includes a list of books referenced in the various chapters of the DMBOK. Having them available by chapter makes for another great (although large) browsable list of possible reading material.

https://www.dama.org/cpages/books-referenced-in-dama-dmbok

Summing Up

We hope you found the above overview of data governance frameworks helpful for initiating or progressing your program. Having talked about people and process aspects, we’ll be looking next at data governance technology considerations.

Defining Your Data Governance Initiative

If you’d like guidance and help on any areas of data governance please don’t hesitate to get in touch. Our data governance service is a flexible, coworking approach that provides assistance wherever you are on your journey.

Getting Started with Data Governance

A data governance initiative will involve embedding various practices, processes and standards in a lot of places. The scope of this is generally proportional to the size of your organisation. You will be more successful in scaling this work if you move general activities to departmental or team level resources. This allows you to establish data owners who are close to the data. This will be more productive and risk averse than a central team who are less familiar with the data. With that in mind, let’s look at how we get your data governance started.

Stakeholders

As with any initiative across an organisation, having strong business buy-in is essential for things to gain and maintain momentum. Without this, your data governance program will likely lack acceptance and struggle to add the necessary value expected.

A good start is to identify initial resources and key personnel who will drive progress and set up the communications channels between these parties. These stakeholders must have a vested interest in the overall success of the program. Start with those who are already involved in data architecture, legal aspects of data ownership, and data infrastructure management. They may well already be making noises about what they’d like to see happening which is great. Then, look to the business for those who will really back the program. Those who really appreciate the potential for improving business capabilities through better data governance are ideal. After all business capability improvement is at the heart of every well considered data governance program. Aim for at least as many business people as you have from elsewhere within the organisation as these will help champion the program with the wider audience.

With communications approaches and stakeholders identified you can start working towards formulating the content of the data governance program.

Data Domains

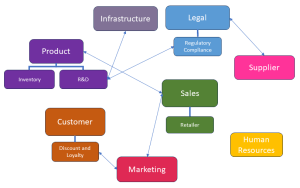

Your organisational data can be shown to exist within conceptual groupings referred to as ‘data domains’. Your organisation may have already established what these are if you have an enterprise data model or a mature data architecture or modelling practice. They will generally align well to departmental functions, with possible additional core domains such as Customer or Product that may span multiple departments. This should help you think about your structure for delegating and dividing the work across teams. A ‘data domain model’ is a great tool to help you understand how your data can be grouped for governance ownership considerations. Even if it is very high level and boundaries are rather fuzzy this view of your ‘problem space’ will help with the ‘divide and conquer’ mindset.

Your organisational data can be shown to exist within conceptual groupings referred to as ‘data domains’. Your organisation may have already established what these are if you have an enterprise data model or a mature data architecture or modelling practice. They will generally align well to departmental functions, with possible additional core domains such as Customer or Product that may span multiple departments. This should help you think about your structure for delegating and dividing the work across teams. A ‘data domain model’ is a great tool to help you understand how your data can be grouped for governance ownership considerations. Even if it is very high level and boundaries are rather fuzzy this view of your ‘problem space’ will help with the ‘divide and conquer’ mindset.

Being able to conceptualise your data estate into say 10-20 such groups, some of which may underlie a number of others, is invaluable.

You can arrive at a first draft data domain model through one or two workshops with relevant business owners. One technique to help arrive at this is using ‘functional decomposition’ of your organisation to identify business capabilities. These may align well to departmental views depending on the nature of your data. It is advisable is to keep your model high-level, avoiding it morphing into a data integration architecture diagram or similar. You can include ‘subdomains’ to help with the next level of detail, but lower levels will generally not prove beneficial for this exercise. Even in a draft form it will provide a much appreciated guide to understanding your data governance landscape.

Teams and Oversight

Establishing a team structure that works best for the program is critical to success and productivity. Each organisation will differ in this regard, although there are some common structures that make sense to consider. The following organisational elements are recommended from McKinsey & Company.

©2020 McKinsey & Company

Data Management Office

Although data ownership can be delegated and transferred to domain-based teams, the management of that data is a discipline that will span the organisation. The data management office exists to ensure that all aspects of ownership and processing of data follow established best practices. This may currently informally exist as a group of data architects and data strategists, infrastructure and operations team leads and similar managers.

Data Council

A central guidance authority that oversees the program will generally be required in any initiative on data governance. This data council will sit across all teams, providing consistency and coordination. It also addresses high-level aspects of the program, such as funding and issue escalation. It may include key stakeholders from the business as well as heads of data such as the Chief Data Officer (CDO) or Chief Information Officer (CIO) and related legal officers. The Chair of the council may be the head of the data management office, although having a senior business figure as chair will work better if business backing needs to be improved.

Domain Teams

How you structure your delivery teams is going to depend on various factors, including organisational culture and existing structures. You may decide on some central teams that provide supporting roles, and then further teams that address data domains. You may instead structure your teams around the different key objectives we talked about in an earlier post Data Governance Objectives and Challenges, such as legal, security etc. although this can detract from a business value driven program. With data domain teams established that are supported by specialist teams you are able to focus on meeting governance requirements that align to business data processes.

Strategy

Principles

Before you can really start to determine what to include, you’ll need a set of principles on which to base your program. Just saying ‘doing data governance’ isn’t going to get you far when it comes to formulating priorities and content later on. You should be looking to add new business capabilities through the actions of the program, thereby ensuring business value. A great way to determine a strategy, as suggested by John Ladley in his book on Data Governance is to list the principles that are basically answering the question ‘What are we trying to achieve?’ in applying data governance to your organisation. In our previous post we talked about ‘Key Data Governance Objectives’ and these should help guide you here. These principles may be relatively common ones, such as ‘Data/Information Quality’ and ‘Risk Management’, or they may be more aligned with a particular aspect of the organisation that needs addressing. They should serve to guide thinking and help justify courses of action.

Policies

From your handful of principles you can then look as determining policies to essentially reinforce and realise these. These policies then form a backbone for commonality across business areas and ensure consistency and quality throughout the program with minimal concern for deviation. As with many aspects of the program, these will evolve over time, but having a good set of core policies in place helps add structure and guidance to initial efforts.

Requirements Gathering

There are some great reference materials on what constitutes data governance and how to go about formulating a program, as we discuss in a later post on Data Governance Frameworks. Once you understand the key constituents, decide with your stakeholders on the relative priorities.

There are some great reference materials on what constitutes data governance and how to go about formulating a program, as we discuss in a later post on Data Governance Frameworks. Once you understand the key constituents, decide with your stakeholders on the relative priorities.

Some organisations may try and determine an all encompassing plan from day one. As well as being a truly overwhelming task this ‘all requirements upfront’ approach will involve significant rework and ‘planning churn’. This may be familiar to those taking a ‘Waterfall’ approach to implementation. Stakeholders may understandably be less than happy with the increase lead time and reduced productivity and value resulting from this.

Agile Requirements Management

Those familiar with Agile software development understand how this approach tends to cut waste and keep requirements relevant. Let’s take a look the benefits of an Agile approach to data governance requirements management.

Why Agile?

One key advantage to an Agile approach is that on each cycle of work the priorities and risks to successful implementation are revisited. The high priority work with the best chance of getting implemented during that cycle is given preference. Those elements that have requirements that are less well defined or understood are refined based on priority. Details are gathered to understand enough to reach a level where the risk to implementation is acceptably low. By defining the details only when the items are confirmed as needed we avoid thrashing out details too early. This avoids those items that may turn out to not actually be required or have changed requirements. Waste and lead time are reduced and items are prioritised and delivered as required. This approach has real benefits with regard to progress, maintaining momentum and generally delivering value early and as needed.

The ‘Agile mindset’ may however be something that exists only within development teams within the organisation if at all. As such if this approach is to be employed there may be a need to ‘win over’ or at least explain the benefits of this way of working.

Determining Your Agile ‘Epics’

If you do decide to work in an Agile fashion, when starting your backlog of Epics, bear in mind that despite the name these Epics shouldn’t be huge. Taking each of the objectives in our previous article Data Governance Objectives and Challenges as an Epic would probably be too broad. You could however arrive at a number of Epics within each of these areas, also having ones that span these objectives. Some of your Epics may be foundational items that are required before you can build further, such as establishing a communication approach for the program, or determining a high-level data domain model.

If you do decide to work in an Agile fashion, when starting your backlog of Epics, bear in mind that despite the name these Epics shouldn’t be huge. Taking each of the objectives in our previous article Data Governance Objectives and Challenges as an Epic would probably be too broad. You could however arrive at a number of Epics within each of these areas, also having ones that span these objectives. Some of your Epics may be foundational items that are required before you can build further, such as establishing a communication approach for the program, or determining a high-level data domain model.

It is relatively easy to determine initial high level ‘Epics’ on the key areas of the program. These are then drilled into to provide a number of ‘Features’ that will form the functional elements of the program. The backlog of these items will evolve and expand over the course of the program. Changes in regulation, security, and internal demands for data will direct the workload over time. This is the nature of the program of work and working Agile will complement this shifting nature. Once you have agreed some of the details for these backlog items you will be able to get your data governance initiative started.

Conclusion

Embarking on your data governance journey doesn’t have to be a planning behemoth or feel like herding cats. With a small group of stakeholders and understanding of how your data estate needs to relate to the business you can start the ball rolling. Agile practices will help to provide value early and prove to the organisation that data governance works at all levels. As we continue this series, we’ll take a brief look at some of the frameworks and technologies that can help you achieve your goals.

Moving You Forward with Data Governance

If you’d like to understand more about getting started with data governance please don’t hesitate to get in touch. Our data governance service is a flexible, coworking approach that provides assistance wherever you are on your journey.

Data Governance Objectives and Challenges

In our previous post What is Data Governance? we discussed the basics of data governance and how it has progressed from its original remit of ‘Compliance and Security’ to a more rounded, user-centric definition. Getting data governance moving in the right direction in any organisation is important more so now than ever. In this post we’ll take a look at some of the key objectives and challenges involved.

Key Data Governance Objectives

Business Capability Improvement

If there is one phrase that sums up the overall reason for embracing data governance, this is probably it. If you’re not improving the business’s ability to do what is beneficial financially, either directly or indirectly, then people will question the whole point of the program. So with that in mind, what are we setting out to do and why?

There are a number of key areas that define what we are aiming to achieve with our data governance initiative. These can be formalised into a program strategy statement, as we’ll discuss later in the series. A suggestion on how to group your efforts is given below.

Compliance

Data regulation is gathering pace amidst criticism for having been lacking in the past. Organisations will need to be conversant with more laws and consequences of drifting from their responsibilities in these regards. Adhering to relevant laws and regulations regarding data privacy and protection is critical. The need to involve legally qualified personnel in this area is not to be underestimated. Greater investment in this area is required in certain industries such as Health Care and Finance.

Data Security and Sensitivity

The need to protect data from unauthorised access or breaches is a point that needs no preamble. What is often missed however is not just the threat from external parties but also that internally. There is a need to understand how internal use and misuse of data can have an impact. The identification and correct handling, retention and disposal of sensitive data is a particularly challenging issue.

Data Quality Improvement

Ensuring that data is accurate, timely, consistent, reliable and complete are just some of the drivers in establishing data trust. The old adage ‘garbage In – garbage out’ is never truer than in this area. Making data quality a key objective will add considerable value to your efforts in data governance.

Operational Efficiency

Streamlining data management processes will improve efficiency and reduce costs. Various aspects of data architecture will determine the degree to which these efficiencies will be achievable. Identification of unwanted duplication of data, redundant or legacy processing and other undesirable elements of the data management activities within the organisation are essential. Data Observability also plays a part here, allowing for identification of issues with data processing. This allows proactive management of the data workloads and corrective actions when required.

Decision-Making Support

Data analytics and business intelligence activities all benefit from the efforts made in data governance. Providing data consumers with accessible, trustworthy data is a key data governance objective. This is essential for supporting strategic business decisions.

Data Governance Challenges to Success

There are a number of key challenges generally encountered when attempting to implement a robust data governance program. These vary in degree based on factors such as industry, organisation size, data manageability and the general opinion towards such an undertaking. Each organisation’s efforts will meet with their own unique bumps in the road of course, however being mindful of common issues encountered helps navigate a path to success.

Cultural Resistance

The nature of data governance is an organisation-wide mindset to data in general, and will involve establishing processes and assigning responsibilities. If there is no clear understanding of the benefits of the program, it risks being perceived as ‘just more hassle’ or ‘more forms to fill’. These negative connotations attached to the program from the start can be hard to remove. Make sure to send a clear message about the objectives and real benefits to data consumers that will result before moving to implement any changes.

The nature of data governance is an organisation-wide mindset to data in general, and will involve establishing processes and assigning responsibilities. If there is no clear understanding of the benefits of the program, it risks being perceived as ‘just more hassle’ or ‘more forms to fill’. These negative connotations attached to the program from the start can be hard to remove. Make sure to send a clear message about the objectives and real benefits to data consumers that will result before moving to implement any changes.

Perceived Value

Given the varying degree of appreciation for a data governance program that will inevitably exist, there will be accompanying differences in opinion on its relevance. Look to appoint stakeholders who understand the real benefits of the program. These help champion the message within the organisation that data governance is not some ‘necessary evil’ or ‘something that just has to be done’. They can promote the benefits to all, either directly or indirectly that delivering on the objectives will bring. If a financial perspective on the benefits is required, this can be relatively easily achieved. Simple discussions around productivity aspects and risk mitigation will yield an appreciation of monetary value. It is advisable to assess early on the value the program will provide in empowering your data professionals. This ‘data value-add’ is often overlooked in favour of reducing risk from non-compliance and security concerns. All these benefits should be included when promoting the endeavour.

Inadequate Resourcing

Another key issue often faced when rolling out elements of data governance is the lack of understanding of the resourcing required. Often data ownership and stewardship duties are dropped unceremoniously into the laps of already overloaded or stretched managerial staff who are given few pointers on what their new additional role as ‘data owner’ or ‘data custodian’ or ‘data access approver’ really entails. The requisite amount of resource needs to be available if the role is to be done correctly. Failure to address this results in inevitable pushback, disengagement or mistakes due to overloading. Make allowances in workloads for induction, training, and execution of these new responsibilities.

Another key issue often faced when rolling out elements of data governance is the lack of understanding of the resourcing required. Often data ownership and stewardship duties are dropped unceremoniously into the laps of already overloaded or stretched managerial staff who are given few pointers on what their new additional role as ‘data owner’ or ‘data custodian’ or ‘data access approver’ really entails. The requisite amount of resource needs to be available if the role is to be done correctly. Failure to address this results in inevitable pushback, disengagement or mistakes due to overloading. Make allowances in workloads for induction, training, and execution of these new responsibilities.

Communication

A data governance program involves a lot of work which can be shared over a wide pool of resources. It is paramount to ensure clear and succinct communication streams are established as more people become involved. Large numbers of meetings discussing standards and policies with a lot of silent attendees is not the best use of time. A portal for sharing knowledge, together with chat platform channels is a much more efficient and flexible means of keeping everyone in the loop for developments and announcements. This frees people up to fit in their responsibilities around other work.

A data governance program involves a lot of work which can be shared over a wide pool of resources. It is paramount to ensure clear and succinct communication streams are established as more people become involved. Large numbers of meetings discussing standards and policies with a lot of silent attendees is not the best use of time. A portal for sharing knowledge, together with chat platform channels is a much more efficient and flexible means of keeping everyone in the loop for developments and announcements. This frees people up to fit in their responsibilities around other work.

Documentation

There will undoubtedly be terms and concepts that some of those involved will not be familiar with. The usual rules apply about introducing these concepts and having glossaries and reference material to ease any learning curve. Establish a portal as the official source of information at the very start of the program. The structure of the documentation store will probably need to flex as things progress. Put in place a few foundational elements together with an approval process for content publishing. This will help ensure that things maintain an agreed structure and quality and tone of content. Encourage submissions from those within the program who will have skills and knowledge that will assist with these. Do not reinvent the wheel here however. There are a huge number of resources already out there to help with data governance in the form of frameworks, which we discuss in our series post Data Governance Frameworks.

Perceived Scale

Often the perceived scale of the program can introduce issues with initiating and motivating those involved. It can appear to be a monumental undertaking ahead. For those directly tasked with getting things moving it is understandably a daunting prospect. Look at the work as a collection of high and lower priority tasks that can be spread over time and across teams. This is obviously preferable to a Sisyphean task for a central team. Resource your efforts accordingly, possibly looking for bringing in external subject matter experts where required. If you have managed to arrive at some ‘ballpark’ figure for the tangible benefits of the program this will help in determining your budget over time and allow you to invest accordingly.

Often the perceived scale of the program can introduce issues with initiating and motivating those involved. It can appear to be a monumental undertaking ahead. For those directly tasked with getting things moving it is understandably a daunting prospect. Look at the work as a collection of high and lower priority tasks that can be spread over time and across teams. This is obviously preferable to a Sisyphean task for a central team. Resource your efforts accordingly, possibly looking for bringing in external subject matter experts where required. If you have managed to arrive at some ‘ballpark’ figure for the tangible benefits of the program this will help in determining your budget over time and allow you to invest accordingly.

Data Architectures

Depending on the maturity of your data architecture capabilities, you may face additional challenges from a data management perspective. You may be fortunate enough to have a well planned, future-proof and data consumer-focused landscape. It may provide good transparency and purpose to your various data assets. You may have arrived at a more ‘accidental architecture’. This will not be as well structured but is still functional and serves a need. Perhaps you are undergoing a ‘Digital Transformation’ initiative and things are very much in flux in this area.

Depending on the maturity of your data architecture capabilities, you may face additional challenges from a data management perspective. You may be fortunate enough to have a well planned, future-proof and data consumer-focused landscape. It may provide good transparency and purpose to your various data assets. You may have arrived at a more ‘accidental architecture’. This will not be as well structured but is still functional and serves a need. Perhaps you are undergoing a ‘Digital Transformation’ initiative and things are very much in flux in this area.

The relative complexity, transparency and stability of your data estate will have a direct influence on how you address various objectives. Data Management and Data Security and Sensitivity will require additional focus in more complex environments. These challenges are not unsurmountable with the help of data governance and data management products and platforms. You may want to implement these to a greater or lesser degree as part of the program. We’ll touch on some of these solutions later in the series.

Evolving Regulations

As mentioned, data regulations are playing catchup. As such there will be a lot of movement in this area as more authorities weigh in, flex their powers and test the mood. If you are late to the game it may take time to get up to speed. Divide the tasks and prioritise, getting external suppliers in where expert resources are not available in-house.

Next Steps

Hopefully this article has given you a reasonable idea of what lies ahead whilst providing you with some points to be aware of when starting your data governance endeavour. In the next article we’ll discuss ways of working to start achieving our objectives.

Ready to Overcome Your Data Governance Challenges?

If you’d like to talk to someone about how to move forward with an effective data governance initiative please don’t hesitate to get in touch. Our data governance service is a flexible, coworking approach that provides assistance wherever you are on your journey.

What is Data Governance?

Data governance is a discipline that exists to ensure the effective, efficient and responsible use of information to allow an organisation to achieve its goals. It is a subject that encompasses the practices, processes, standards, and metrics required in meeting this challenge. Not surprisingly, it can be quite an imposing and at times foreboding subject, conjuring up images of red tape, bureaucracy and obstacles for those who work in data. Data governance does however exist for the benefit of all. Despite common perceptions to the contrary, its real purpose is not to complicate matters. Its aims are to provide a structured framework for managing data assets across an organisation, ensuring that data is accurate, available, consistent, and secure.

At its core, data governance involves the coordination of people, processes, and technology to manage, protect and capitalise on data assets. The practice defines who can take what action, upon what data, in what situations, using what methods. It’s a critical component of an organisational data strategy, including data quality, data management and policies, as well as aspects of business process management and risk management. With all this in mind, given the importance of data to just about every organisation, data governance is not something that can really be kicked down the road for too long.

Traditional Top-Down Data Governance

Historically, data governance has been a top-down initiative. The ‘command and control’ approach and accompanying rules are often perceived by those subject to them as obstructive and arduous. For a long time, data governance was about compliance and data security only. Both these are of course incredibly important to any organisation working with data, with reputations being frequently thrown to the wall in their absence. Organisations of all sizes often make mistakes in these areas as we are all aware of with regular media coverage of breaches and abuse of rules around customer/user data. There is however much more to the remit of what we now refer to as data governance.

Historically, data governance has been a top-down initiative. The ‘command and control’ approach and accompanying rules are often perceived by those subject to them as obstructive and arduous. For a long time, data governance was about compliance and data security only. Both these are of course incredibly important to any organisation working with data, with reputations being frequently thrown to the wall in their absence. Organisations of all sizes often make mistakes in these areas as we are all aware of with regular media coverage of breaches and abuse of rules around customer/user data. There is however much more to the remit of what we now refer to as data governance.

Modern Bottom-Up Data Governance

A more recent view of data governance includes more elements that are focused on the benefits to employees who work with data. Any data consumers from business analysts and C-suite executives to data engineers and data scientists could be said to have the same fundamental needs of their data. If they are to capitalise on the potential of their data it needs to be trustworthy, adaptable, well understood and available. This is in essence what makes data valuable. If any of these are lacking, the value decreases as the consumers of that data wrestle with the issues that result.

More and more data is being consumed by businesses who also seek more agility in working with it. This moves the responsibilities and ownership to the early stages of this data’s journey within the organisation. ‘Self-service’ data analytics brings greater freedom to work with data at a departmental level. This requires a shift of responsibility regarding the ownership of that data. If data governance is to scale to satisfy the modern organisation’s appetite for data, there will be a need to extend it beyond the traditional central team of stewards and steering committees. The onus should be broadened to include data subject owners at the departmental level, or perhaps even lower. The role of central organisational elements of the program are still critical in defining company-wide requirements and standards. They are however only part of the whole program, serving a number of roles that generally do not require an intimate understanding of business data.

More and more data is being consumed by businesses who also seek more agility in working with it. This moves the responsibilities and ownership to the early stages of this data’s journey within the organisation. ‘Self-service’ data analytics brings greater freedom to work with data at a departmental level. This requires a shift of responsibility regarding the ownership of that data. If data governance is to scale to satisfy the modern organisation’s appetite for data, there will be a need to extend it beyond the traditional central team of stewards and steering committees. The onus should be broadened to include data subject owners at the departmental level, or perhaps even lower. The role of central organisational elements of the program are still critical in defining company-wide requirements and standards. They are however only part of the whole program, serving a number of roles that generally do not require an intimate understanding of business data.

Key Components of Data Governance

There are differing opinions on what really ‘defines’ a data governance program. A good starting list that covers most aspects is given below:

Data Quality

This focuses on ensuring that data is accurate, complete, and reliable. If data is to be trusted, this is a key aspect of building that trust.

Data Management

The processes and policies for handling data throughout its lifecycle. Data should be well managed, shaped to fit the consumer, and provided for consumption. Ease of use and availability are key considerations. This is traditionally where organisations will invest a large part of their resources in data engineers and systems administrators. To complement these, there needs to be the understanding of cataloguing, describing and communicating these data assets across the organisation.

Data Policies

The rules and regulations that govern data usage within an organisation. These traditional elements aren’t going away and form the bedrock on which teams can build their own initiatives. From there they can expand upon and decentralise responsibility for data ownership.

Business Process Management

Aligns data governance with business processes to ensure that data supports business objectives and capabilities. The true value in data is in providing the insight and understanding required by the business to be successful. This alignment of the ‘behaviour of data’ within a business with the various drivers of success is essential more today than ever. Careful consideration is required throughout all aspects of an organisation’s operations. All areas are fueled by the availability of the right data, from logistics to marketing and beyond. If the flow of that data is stifled, so too are the opportunities within the business.

Risk Management

Identifies and mitigates risks related to data privacy, security, and compliance. The risks associated with an organisation’s data are reduced only by having a firm understanding of this information. Where it resides, what it includes, how it is being accessed and how is should be safeguarded are all paramount. Penalties for not managing this risk are not just financial in the form of hefty fines but perhaps more damagingly reputational. Customers have suffered a slew of data breaches and misappropriation of their data over the last decade or so. Organisations need to do more to address these very real concerns.

The above components highlight key drivers behind programs on data governance. For more formal and in-depth definitions there are various frameworks available. We’ll discuss in our series post Data Governance Frameworks.

Summing Up

We are all very aware of the rapid increase in the need for data and its importance within the organisation. The availability of affordable platforms for generating value have placed data front and centre of businesses of all sizes. Given the perceived overhead of the list above it is no surprise that many organisations are late to the game. Forming a robust data governance program or discipline is an involved undertaking. However, for those that truly understand the value of their data and the responsibilities of ownership, data governance is a subject that should be embedded in all areas of the business.

During this series we’ll be taking a look at some of the challenges of data governance. We’ll also discuss strategies and approaches for overcoming what may at first appear to be a Herculean task. Once we understand the art of the possible we will then discuss how this can be employed to maximise business benefit.

Helping You Apply Effective Data Governance

If you’d like to understand more about data governance don’t hesitate to get in touch. Our data governance service is a flexible, coworking approach that provides assistance wherever you are on your journey.

Data Governance

In our latest blog series, this time on the contemporary subject of data governance, we’ll be taking a look at what it is, why it matters, and how to get moving with an organisational program.

We’ll also take a look at various frameworks and technologies that will help you understand what the undertaking involves and how to really focus on the benefits to your business.

First up, let’s take a look at what we mean by data governance.

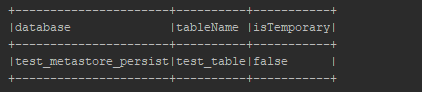

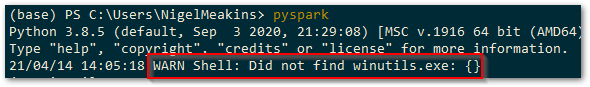

The Hive MetaStore and Local Development

In this next post in our series focussing on Databricks development, we’ll look at how to create our own Hive metastore locally using SQL Server, and wire it up for the use of our development environment. Along the way we’ll dip into a few challenges with getting this running with your own projects and how to overcome them. This should provide us with our final element of our local Spark environment for Databricks development.

The Hive Metastore

Part of the larger Apache Hive data warehouse platform, the Hive metastore is a repository for details relating to Hive databases and their objects. It is adopted by Spark as the solution for storage of metadata regarding tables, databases and their related properties. An essential element of Spark, it is worth getting to know this better so that it can be safeguarded and leveraged for development appropriately.

Hosting the Hive Metastore

The default implementation of the Hive metastore in Apache Spark uses Apache Derby for its database persistence. This is available with no configuration required but is limited to only one Spark session at any time for the purposes of metadata storage. This obviously makes it unsuitable for use in multi-user environments, such as when shared on a development team or used in Production. For these implementations Spark platform providers opt for more robust multi-user ACID-compliant relational database product for hosting the metastore. Databricks opts for Azure SQL Database or MySQL and provides this preconfigured for your workspace as part of the PaaS offering.

Hive supports hosting the metastore on Apache Derby, Microsoft SQL Server, MySQL, Oracle and PostgreSQL.

SQL Server Implementation

For our local development purposes, we’ll walk through hosting the metastore on Microsoft SQL Server Developer edition. I won’t be covering the installation of SQL Server as part of this post as we’ve got plenty to be blabbering on about without that. Please refer to the Microsoft Documentation or the multitude of articles via Google for downloading and installing the developer edition (no licence required).

Thrift Server

Hive uses a service called HiveServer for remote clients to submit requests to Hive. Using Apache Thrift protocols to handle queries using a variety of programming languages, it is generally known as the Thrift Server. We’ll need to make sure that we can connect to this in order for our metastore to function, even though we may be connecting on the same machine.

Hive Code Base within Spark

Spark includes the required Hive jars in the \jars directory of your Spark install, so you won’t need to install Hive separately. We will however need to take a look at a few of the files provided in the Hive code base to help with configuring Spark with the metastore.

Creating the Hive Metastore Database

It is worth mentioning at this point that, unlike Spark, there is no Windows version of Hive available. We could look to running via Cygwin or Windows Subsystem for Linux (WSL) but we don’t actually need to be running Hive standalone so no need. We will be creating a metastore database on a local instance of SQL Server and pointing Spark to this as our metadata repository. Spark will use its Hive jars and the configurations we provide and everything will play nicely together.

The Hive Metastore SchemaTool

Within the Hive code base there is a tool to assist with creating and updating of the Hive metastore, known as the ‘SchemaTool‘. This command line utility basically executes the required database scripts for a specified target database platform. The result is a metastore database with all the objects needed by Hive to track the necessary metadata. For our purposes of creating the metastore database we can simply take the SQL Server script and execute it against a database that we have created as our metastore. The SchemaTool application does also provide some functionality around updating of schemas between Hive versions, but we can handle that with some judicious use of the provided update scripts should the need arise at a later date.

We’ll be using the MSSQL scripts for creating the metastore database, which are available at:

https://github.com/apache/hive/tree/master/metastore/scripts/upgrade/mssql

In particular, the file hive-schema-2.3.0.mssql.sql which will create a version 2.3.0 metastore on Microsoft SQL Server.

Create the database

Okay first things first, we need a database. We also need a user with the required permissions on the database. It would also be nice to have a schema for holding all the created objects. This helps with transparency around what the objects relate to, should we decide to extend the database with other custom objects for other purposes, such as auditing or configuration (which would sit nicely in their own schemas). Right, that said, here’s a basic script that’ll set that up for us.

create database metastore; create login metastore with password = 'some-uncrackable-adamantium-password', default_database = metastore; use Hive; create user metastore for login metastore; go; create schema meta authorization metastore; go; grant connect to metastore; grant create table to metastore; grant create view to metastore; alter user metastore with default_schema = meta;

For simplicity I’ve named my database ‘Hive’. You can use whatever name you prefer, as we are able to specify the database name in the connection configuration.

Next of course we need to run the above hive schema creation script that we acquired from the Hive code base, in order to create the necessary database objects in the Hive metastore.

Ensure that you are logged in as the above metastore user so that the default schema above is applied when the objects are created. Execute the hive schema creation script.

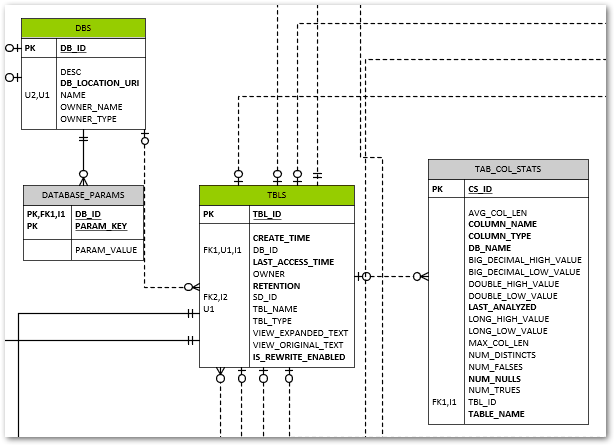

The resultant schema isn’t too crazy.

You can see some relatively obvious tables created for Spark’s metadata needs. The DBS table for example lists all our databases created, and TBLS contains, yep, you guessed it, the tables and a foreign key to their related parent database record in DBS.

The VERSION table contains a single row that tracks the Hive metastore version (not the Hive version).

Having this visibility into the metadata used by Spark is a big benefit should you be looking to drive your various Spark-related data engineering tasks from this metadata.

Connecting to the SQL Server Hive Metastore

JDBC Driver Jar for SQL Server

One file we don’t have included as standard in the Spark code base is the JDBC driver to allow us to connect to SQL Server. We can download this from the link below.

From the downloaded archive, we need a Java Runtime Engine 8 (jre8) compatible file, and I’ve chosen mssql-jdbc-9.2.1.jre8.jar as a pretty safe bet for our purposes.

Once we have this, we need to simply copy this to the \jars directory within our Spark Home directory and we’ll have the driver available to Spark.

Configuring Spark for the Hive Metastore

Great, we have our metastore database created and the necessary driver file available to Spark for connecting to the respective SQL Server RDBMS platform. Now all we need to do is tell Spark where to find it and how to connect. There are a number of approaches to providing this, which I’ll briefly outline.

hive-site.xml

This file allows the setting of various Hive configuration parameters in xml format, including those for the metastore, which are then picked up from a standard location by Spark. This is a good vehicle for keeping local development-specific configurations out of a common code base. We’ll use this for storing the connection information such as username, password, and we’ll bundle in the jdbc driver and jdbc connection URL. A template file for hive-site.xml is provided as part of the hive binary build, which you can download at https://dlcdn.apache.org/hive/. I’ve chosen apache-hive-2.3.9-bin.tar.gz.

You’ll find a hive-site.xml.template file in the \conf subdirectory which contains details of all the configurations that can be included. It may make your head spin looking through them, and we’ll only use a very small subset of these for our configuration.

Here’s what our hive-site.xml file will end up looking like. You’ll need to fill in the specifics for your configuration parameters of course.

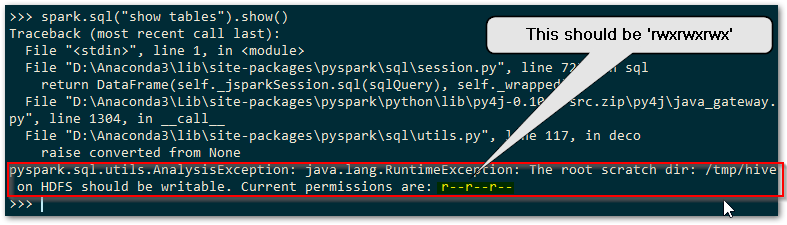

<configuration>

<property>

<name>hive.exec.scratchdir</name>

<value>some-path\scratchdir</value>

<description>Scratch space for Hive jobs</description>

</property>

<property>

<name>hive.metastore.warehouse.dir</name>

<value>some-path\spark-warehouse</value>

<description>Spark Warehouse</description>

</property>

<property>

<name>javax.jdo.option.ConnectionURL</name>

<value>jdbc:sqlserver://some-server:1433;databaseName=metastore</value>

<description>JDBC connect string for a JDBC metastore</description>

</property>

<property>

<name>javax.jdo.option.ConnectionDriverName</name>

<value>com.microsoft.sqlserver.jdbc.SQLServerDriver</value>

<description>Driver class name for a JDBC metastore</description>

</property>

<property>

<name>javax.jdo.option.ConnectionUserName</name>

<value>metastore</value>

<description>username to use against metastore database</description>

</property>

<property>

<name>javax.jdo.option.ConnectionPassword</name>

<value>some-uncrackable-adamantium-password</value>

<description>password to use against metastore database</description>

</property>

</configuration>

You’ll need to copy this file to your SPARK_HOME\conf directory for this to be picked up by Hive.

Note the use of the hive.metastore.warehouse.dir setting to define the default location for our hive metastore data storage. If we create a Spark database without specifying an explicit location our data for that database will default to this parent directory.

spark-defaults.conf

This allows for setting of various spark configuration values, each of which starts with ‘spark.’. We can set within here any of the values that we’d ordinarily pass as part of the Spark Session configuration. The format is simple name value pairs on a single line, separated by white space. We won’t be making use of this file in our approach however, instead preferring to set the properties via the Spark Session builder approach which we’ll see later. Should we want to use this file, note that any Hive-related configurations would need to be prefixed with ‘spark.sql.’.

Spark Session Configuration

The third option worth a mention is the use of the configuration of the SparkSession object within our code. This is nice and transparent for our code base, but does not always behave as we’d expect. There are a number of caveats worth noting with this approach, some of which have been garnered through painful trial and error.

SparkConf.set is for Spark settings only

Seems pretty obvious when you think about it really. You can only set properties which are prefixed spark.

‘spark.sql.’ Prefix for Hive-related Configurations

As previously mentioned, just to make things clear, if we want to add any Hive settings, we need to prefix these ‘spark.sql.’

Apply Configurations to the SparkContext and SparkSession

All our SparkConf values must be set and applied to the SparkContext object with which we create our SparkSession. The same SparkConf must be used for the Builder of the SparkSession. This is shown in the code further down when we come to how we configure things on the SparkSession.

Add Thrift Server URL for Own SparkSession

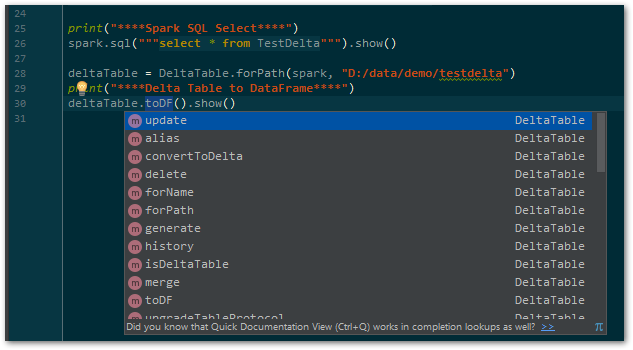

The hive thrift server URL must be specified when we’re creating our own SparkSession object. This is an important point for when we want to configure our own SparkSession such as for adding the Delta OSS extensions. If you are using a provided SparkSession, such as when running PySpark from the command line, this will have been done for you and you’ll probably be blissfully unaware of the necessity of this config value. Without it however you simply won’t get a hive metastore connection and your SparkSession will not persist any metadata between sessions.

We’ll need to add the delta extensions for the SparkSession and catalog elements in order to get Delta OSS functionality.

Building on the SparkSessionUtil class that we had back in Local Development using Databricks Clusters, adding the required configurations for our hive metastore, our local SparkSession creation looks something like

import os

from pyspark import SparkConf, SparkContext

from pyspark.sql import SparkSession

from delta import *

from pathlib import Path

DATABRICKS_SERVICE_PORT = "8787"

class SparkSessionUtil:

"""

Helper class for configuring Spark session based on the spark environment being used.

Determines whether are using local spark, databricks-connect or directly executing on a cluster and sets up config

settings for local spark as required.

"""

@staticmethod

def get_configured_spark_session(cluster_id=None):

"""

Determines the execution environment and returns a spark session configured for either local or cluster usage

accordingly

:param cluster_id: a cluster_id to connect to if using databricks-connect

:return: a configured spark session. We use the spark.sql.cerespower.session.environment custom property to store

the environment for which the session is created, being either 'databricks', 'db_connect' or 'local'

"""

# Note: We must enable Hive support on our original Spark Session for it to work with any we recreate locally

# from the same context configuration.

# if SparkSession._instantiatedSession:

# return SparkSession._instantiatedSession

if SparkSession.getActiveSession():

return SparkSession.getActiveSession()

spark = SparkSession.builder.config("spark.sql.cerespower.session.environment", "databricks").getOrCreate()

if SparkSessionUtil.is_cluster_direct_exec(spark):

# simply return the existing spark session

return spark

conf = SparkConf()

# copy all the configuration values from the current Spark Context

for (k, v) in spark.sparkContext.getConf().getAll():

conf.set(k, v)

if SparkSessionUtil.is_databricks_connect():

# set the cluster for execution as required

# Note: we are unable to check whether the cluster_id has changed as this setting is unset at this point

if cluster_id:

conf.set("spark.databricks.service.clusterId", cluster_id)

conf.set("spark.databricks.service.port", DATABRICKS_SERVICE_PORT)

# stop the spark session context in order to create a new one with the required cluster_id, else we

# will still use the current cluster_id for execution

spark.stop()

con = SparkContext(conf=conf)

sess = SparkSession(con)

return sess.builder.config("spark.sql.cerespower.session.environment", "db_connect",

conf=conf).getOrCreate()

else:

# Set up for local spark installation

# Note: metastore connection and configuration details are taken from <SPARK_HOME>\conf\hive-site.xml

conf.set("spark.sql.extensions", "io.delta.sql.DeltaSparkSessionExtension")

conf.set("spark.sql.catalog.spark_catalog", "org.apache.spark.sql.delta.catalog.DeltaCatalog")

conf.set("spark.broadcast.compress", "false")

conf.set("spark.shuffle.compress", "false")

conf.set("spark.shuffle.spill.compress", "false")

conf.set("spark.master", "local[*]")

conf.set("spark.driver.host", "localhost")

conf.set("spark.sql.debug.maxToStringFields", 1000)

conf.set("spark.sql.hive.metastore.version", "2.3.7")

conf.set("spark.sql.hive.metastore.schema.verification", "false")

conf.set("spark.sql.hive.metastore.jars", "builtin")

conf.set("spark.sql.hive.metastore.uris", "thrift://localhost:9083")

conf.set("spark.sql.catalogImplementation", "hive")

conf.set("spark.sql.cerespower.session.environment", "local")

spark.stop()

con = SparkContext(conf=conf)

sess = SparkSession(con)

builder = sess.builder.config(conf=conf)

return configure_spark_with_delta_pip(builder).getOrCreate()

@staticmethod

def is_databricks_connect():

"""

Determines whether the spark session is using databricks-connect, based on the existence of a 'databricks'

directory within the SPARK_HOME directory

:param spark: the spark session

:return: True if using databricks-connect to connect to a cluster, else False

"""

return Path(os.environ.get('SPARK_HOME'), 'databricks').exists()

@staticmethod

def is_cluster_direct_exec(spark):

"""

Determines whether executing directly on cluster, based on the existence of the clusterName configuration

setting

:param spark: the spark session

:return: True if executing directly on a cluster, else False

"""

# Note: using spark.conf.get(...) will cause the cluster to start, whereas spark.sparkContext.getConf().get does