Terraform Modules and Code Structure

Applying Structure to your Resource Definitions

In this article I’ll be going over how best to structure your Terraform resource code into modules. This draws on the practices outlined in the site https://www.terraform-best-practices.com and the accompanying GitHub at https://github.com/antonbabenko/terraform-best-practices. It is intended to act as a summary of that content together with some of my own observations and suggestions thrown in for good measure. Although not technically Azure related, it is a subject central to your best Infrastructure as Code endeavours with Terraform.

Modules

Structuring your resource code into modules makes them reusable and easily maintainable. I guess you could say it makes them, well, modular. You can find out all about modules from the Terraform docs at https://www.terraform.io/docs/modules/composition.html so I won’t go into them too much here.

Registries

Modules become particularly powerful when you start to publish them centrally. Terraform supports a number of repositories for these, such as file shares, GitHub, BitBucket and Terraform Registry. Users can then reference the repository modules for use within their own deployments.

Module Definitions

How you determine what constitutes a module is really down to you. It will depend on how your deployments are structured and how you reuse resource definitions. Terraform recommend dividing into natural groupings such as networking, databases, virtual machines, etc. However you decide to chunk up your infrastructure deployment definitions, there are some guidelines on what to include.

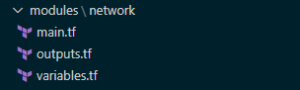

Each module is contained in its own folder and should contain a file for each of the following:

- The resource configuration definitions. This is typically named main.tf

- The outputs to be consumed outside of the module. Again, no surprises at being called outputs.tf

- The variables that are used within the module. Hardly surprisingly this is typically called variables.tf

Some teams go a little further and split up certain resource types within the module, such as security or network resources, into their own separate .tf files to be used as well as main.tf. This may make sense where the module contains a large number of resources, and managing them in a single main.tf file becomes unwieldy.

Module ‘Contracts’

In Object Oriented terms, you can loosely equate the variables to class method parameters required for the module. Similarly the Outputs are like the returns from methods and the main as the class itself. I’m sure there are plenty of purists that would point out floors in this comparison. However, conceptually it is good enough when thinking about how to encapsulate things (if you squint a bit). The variables and the outputs should form a sort of contract for use of the module. As such these definitions should try and remain relatively constant like the best library interfaces try to.

You can of course nest module folders within other module folders. However, generally speaking, it is not recommended to have very deep nested module hierarchies as this can make development difficult. Typically one level of modules, usually in a folder called ‘modules’ (again no prizes for originality here) is the accepted standard. You may of course opt for calling your folder ‘Bernard’, or ‘marzipan’ or whatever you like. Let’s face it though ‘modules’ is probably a lot more self-explanatory.

A basic module might look like the following:

Referencing Modules

With your modules nicely encapsulated for potential reuse and standards and all that loveliness, you need to make use of them. In your root module, being the top level entry point of your Terraform configuration code, you add references such as shown below:

module "sqldatabase-plan9" {

source = "./modules/sqldatabase"

resource_group_name = "${azurerm_resource_group.martians.name}"

sql_server_name = "${local.sql_server_alien_invasion}"

sql_server_version = "${var.sql_server_version}"

...

This then defines a resource using the module. Simply add your variable assignments that will be used within the module as required and you’re good to go.

Some teams like prefixes (mod-, m- etc.) on these files in order to distinguish them from resources that are standalone, single-file definitions (in turn perhaps prefixed res-, r-). I’m not a big fan of prefixing by subtypes (remember Hungarian Notation..?) as this tends to get in the way of writing code. For me, simple naming that aligns with other resource file naming makes more sense.

Variables Overload?

One area to be mindful of is to not introduce variables for every attribute of your module’s resources. If an attribute is not going to be subject to change then it won’t need a variable. Remembering the maintenance of your code is a key consideration of any good ‘Coding Citizen’. Too many variables will quickly overwhelm those not familiar.

There is of course a balance to be struck here. Too few variables and you can’t really reuse your module as it is too specific for others’ needs. It may make sense to have variations on modules that have various attributes preset for a specific workload. For example a certain Virtual Machine role type will ordinarily have a bunch of attributes that don’t differ. The standard advice of using your best judgement and a little forethought applies as with most things. Personally I’d rather work with two modules that are specific than one that is vague and requires supplying many more variables.

Summing Up

So that just about covers the main points I have to share on Terraform modules and resource code structure. I hope this has provided some insight and guidance of value based on my adventures in Terraform module land. They’re definitely worth getting familiar with early on to simplify and structure your efforts. As your organisation’s deployments grow, maturity in this area will soon pay off for all involved.

The last post in this series (I know, gutting right?) coming up soon will cover Tips and Tricks with Terraform and Azure DevOps that I’ve picked up on my travels. Thanks for reading and stay safe.

Terraform with Azure DevOps: Key Vault Secrets

Key Vault Secrets, Terraform and DevOps

This article discusses the incorporation of Key Vault Secret values in Terraform modules and how they can be used as part of a release pipeline definition on Azure DevOps.

Azure Key Vault

Secret management done right in Azure basically involves Key Vault. If you’re not familiar with this Azure offering, you can get the low-down at the following link:

https://docs.microsoft.com/en-us/azure/key-vault/

This article assumes you have followed best practice regarding securing your state file, as described in Terraform with Azure DevOps: Setup. Outputs relating to Secret values will be stored within the state file, so this is essential for maintaining confidentiality.

There are two key approaches to using Key Vault secrets within your Terraform deployments.

Data Sources for Key Vault and Secrets Data References.

This involves using Terraform to retrieve the required Key Vault. One of the advantages of this method is that it avoids the need to create variables within Azure DevOps for use within the Terraform modules. This can save a lot of ‘to-ing and fro-ing’ between Terraform modules and the DevOps portal, leaving you to work solely with Terraform for the duration. It also has the advantage of being self-contained within Terraform, allowing for easier testing and portability.

Azure Key Vault Data Source

We’ll assume you have created a Key Vault using the azurerm_key_vault resource type, added some secrets using the azurerm_key_vault_secret and set an azurerm_key_vault_access_policy for the required Users, Service Principals, Security Groups and/or Azure AD Applications.

If you don’t have the Key Vault and related Secrets available in the current Terraform modules that you are using, you will need to add a data source for these resources in order to reference these. This is typically the case if you have a previously deployed (perhaps centrally controlled) Key Vault and Secrets.

Setting up the Key Vault data source in the same Azure AD tenant is simply a matter of supplying the Key Vault name and Resource Group. Once this is done you can access various outputs such as Vault URI although in practice you’ll only really need the id attribute to refer to in Secret data sources.

data "azurerm_key_vault" "otherworld-visitors" {

name = "ET-and-friends"

resource_group_name = "central-rg-01"

}

output "vault_uri" {

value = data.azurerm_key_vault.central.vault_uri

}

I’ll leave you to browse the official definition for the azurerm_key_vault data source for further information on outputs.

Azure Key Vault Secrets Data Source

Create Key Vault Secret data sources for each of the secrets you require.

data "azurerm_key_vault_secret" "ufo-admin-login-password" {

name = "area-51-admin-password"

key_vault_id = data.azurerm_key_vault.otherworld-visitors.id

}

output "secret_value" {

value = data.azurerm_key_vault_secret.ufo-admin-login-password.value

}

There are again a number of outputs for the data source, including the Secret value, version and id attributes.

You can then reference the Secret’s value by using the respective Key Vault Secret data source value attribute wherever your module attributes require it.

resource "azurerm_sql_database" "area-51-db" {

name = "LittleGreenPeople"

administrator_login_password = "${data.azurerm_key_vault_secret.ufo-admin-login-password.value}"

....

}

If you are using a centralised variables file within each module, which aligns with recommended best practice, this means only having to change the one file when introducing new secrets. Our variables file simply references the required Key Vault Secret data sources as below,

ufo_admin_login_password = "${data.azurerm_key_vault_secret.ufo-admin-login-password.value}"

and our module resource includes the variable reference.

resource "azurerm_sql_database" "area-51-db" {

name = "LittleGreenPeople"

administrator_login_password = "${var.ufo_admin_login_password}"

....

}

As previously mentioned this has not involved any Azure DevOps elements and the Terraform won’t require additional input variables in order to work with the Key Vault Secrets.

Retrieval of Key Vault Secret Values into DevOps Variables

The second approach uses a combination of DevOps variable groups and Terraform functionality to achieve the same end result.

DevOps Key Vault Variable Group

The first step is to grab our secrets into DevOps variables for use within the pipeline. Variable groups can be linked to a Key Vault as below.

This then allows the mapping of Secrets to DevOps variables for use within the various tasks of our pipelines.

I’ll demonstrate two ways to work with these variables within our Terraform modules. I’m sure there are others of course, but these are ones that I’ve found simplest for DevOps – Terraform integration.

Replacement of Tokenised Placeholders

The Replace Tokens task can be used to to replace delimited placeholders with secret values stored in variables. This does of course require that you adopt a standard for your placeholders that can be used across your modules. This approach can result in code that is disjointed to read, but is a common practice with artifacts such as app.config files in the DotNet world. The advantage of this is that you can take a single approach to Secret substitution. We can use Token replacement for both of these areas your code, be it Terraform IaC or DotNet.

Use of ‘TF_VAR_’ Variables

The other technique I mention here is the use of the inbuilt support for variables with names that are prefixed ‘TF_VAR_’. Any environment variables with this naming convention will be mapped by design to Terraform variables within your modules. More information from Terraform docs is available at https://www.terraform.io/docs/commands/environment-variables.html.

We can pass DevOps variables that have been populated with Secrets values into the Terraform task as Environment Variables. You can then use standard variable substitution within your modules. So, ‘TF_VAR_my_secret’ will substitute for the ‘my_secret’ Terraform variable. Please note that all DevOps variables containing secret values should be marked as sensitive. This then obfuscates the variable values within the DevOps log.

Summing Up

Terraform and Azure DevOps allow more than one method for building pipelines that require secrets stored within Key Vault. For me, the Terraform ‘native’ approach of using Key Vault and Key Vault secrets data sources via the Azure RM Terraform provider is the simplest approach. There is no overhead of managing DevOps variables involved which keeps things nicely contained. You may of course prefer alternatives such as those others shown above or have another method, which I’d love to hear about.

I hope this post has provided some insight into using Terraform within Azure DevOps. These two technologies are a winning combination in address real-world Infrastructure as Code adoption within your organisation.

In the final post of this series I’ll be looking at best practices for managing your code using Terraform Modules.