Dependencies in Terraform and ARM Templates

Dependency Management

To determine the order in which resources are deployed, and how it all fits together, we need to understand the dependencies that exist between our resources. Dependencies are a notable source of maintenance effort and complexity in our Infrastructure as Code (IaC) efforts as our deployment requirements change over time. In this post we’ll look at how ARM templates and Terraform deal with the gnarly business of dependencies within resource deployments.

ARM Templates

Implicit Dependencies

References to Other Resource Properties

The ‘reference’ function can be used to retrieve properties of one resource for use within another. For example:

```json
"properties": {
  "originHostHeader": "[reference(variables('webAppName')).hostNames[0]]",
  ...
}
```

For an implicit dependency to be created, the referenced resource must be defined within the same template and must be referred to by its name. Passing a resource ID to ‘reference’ instead will not create an implicit dependency.

List* Functions

Using the ‘list*’ functions within your template, as described at https://docs.microsoft.com/en-us/azure/azure-resource-manager/templates/template-functions-resource#list, will also create an implicit dependency.

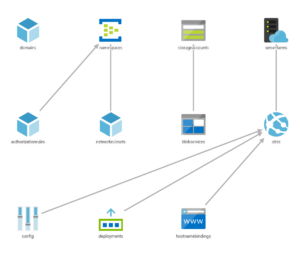

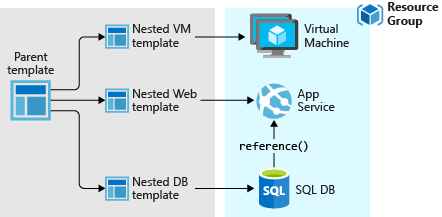

Linked/Nested Templates

When calling a linked/nested template, ARM creates an implicit dependency on the deployment defined within the linked template, essentially completing the linked deployment first. This is an ‘all or nothing’ dependency, with no ability to define more granular, resource-level dependencies across templates.

Explicit Dependencies using ‘dependsOn’

For resources defined within the same template that are not related through ‘reference’ or ‘list*’ functions, ARM requires dependencies to be declared explicitly using the ‘dependsOn’ element. An example of its use is given below.

```json
{
  "type": "Microsoft.Compute/virtualMachineScaleSets",
  "name": "[variables('namingInfix')]",
  "location": "[variables('location')]",
  "apiVersion": "2016-03-30",
  "tags": {
    "displayName": "VMScaleSet"
  },
  "dependsOn": [
    "[variables('loadBalancerName')]",
    "[variables('virtualNetworkName')]",
    "storageLoop"
  ],
  ...
}
```

It is not possible to define dependencies across different templates in this way. Managing these dependencies within each template needs ongoing attention, and a missed entry can easily result in a failed deployment. For deployments with many resources, this can add considerable overhead to our efforts to manage things via IaC.

Terraform

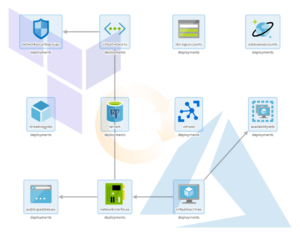

Implicit Dependencies

For all resources defined within Terraform modules, both the root module and any modules it references, Terraform determines the dependencies itself and carries out the resource management actions in the required order. There is generally no need to declare dependencies between resources explicitly, and as items are added or removed the dependencies are adjusted accordingly. Terraform infers that one resource depends on another whenever it references one of that resource’s attributes, such as its id. This is essentially the same behaviour as the ARM template ‘reference’ function, with the main advantage that in Terraform the referenced resources can live in separate files or modules.
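To illustrate, here is a minimal sketch assuming an already configured azurerm provider (of the 2.x/3.x era, where ‘azurerm_storage_container’ still takes ‘storage_account_name’); all resource names are hypothetical. No ‘depends_on’ appears anywhere: each attribute reference is enough for Terraform to order the operations, even though the resources are split across two files of the same root module.

```hcl
# main.tf
resource "azurerm_resource_group" "example" {
  name     = "rg-deps-example"
  location = "West Europe"
}

# storage.tf (a second file in the same root module)
resource "azurerm_storage_account" "example" {
  name                     = "stdepsexample001"
  resource_group_name      = azurerm_resource_group.example.name # implies: created after the resource group
  location                 = azurerm_resource_group.example.location
  account_tier             = "Standard"
  account_replication_type = "LRS"
}

resource "azurerm_storage_container" "datalake" {
  name                  = "datalake"
  storage_account_name  = azurerm_storage_account.example.name # implies: created after the storage account
  container_access_type = "private"
}
```

Running `terraform graph` against a configuration like this prints the dependency graph Terraform has inferred, in DOT format.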

Explicit Dependency Definition

One area where dependencies need to be defined explicitly is where Terraform resource providers cannot cover every aspect of the required deployment. Two common instances of this within Azure are:

- Execution of ARM templates from Terraform using the ‘azurerm_template_deployment’ resource

- Ad hoc script execution (Bash, PowerShell, Cmd, Python etc.)

Terraform will be unaware of the resources actually deployed within an ARM template and as such will not be able to determine which other resources may depend on these. Likewise, actions undertaken within scripts and other executables will also present no known resource dependencies for Terraform to consider. In both these scenarios we need to state what our dependencies will be.
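As a sketch of the first scenario, the configuration below deploys a hypothetical ARM template (‘templates/legacy.json’, assumed to create the storage account named in its parameters) through ‘azurerm_template_deployment’, a resource that later azurerm provider versions deprecate in favour of ‘azurerm_resource_group_template_deployment’. Because Terraform cannot see the storage account inside the template, the follow-on script declares its dependency on the deployment explicitly.

```hcl
resource "azurerm_resource_group" "example" {
  name     = "rg-legacy-example"
  location = "West Europe"
}

# Deploys a hypothetical ARM template; Terraform only tracks the deployment
# itself, not the resources the template creates.
resource "azurerm_template_deployment" "legacy" {
  name                = "legacy-arm-resources"
  resource_group_name = azurerm_resource_group.example.name
  deployment_mode     = "Incremental"
  template_body       = file("${path.module}/templates/legacy.json")

  parameters = {
    storageName = "stlegacyexample001"
  }
}

# The script needs the storage account created by the template, so the
# ordering has to be declared by hand with depends_on.
resource "null_resource" "configure_legacy_storage" {
  depends_on = [azurerm_template_deployment.legacy]

  provisioner "local-exec" {
    # Assumes the Azure CLI is installed and logged in on the machine running Terraform.
    command = "az storage account show --name stlegacyexample001 --resource-group ${azurerm_resource_group.example.name} --query id"
  }
}
```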

Explicit Dependencies using ‘depends_on’

Terraform allows dependencies to be defined explicitly using the ‘depends_on’ meta-argument of a resource. In the example below, all of the listed resources must be deployed before this resource.

```hcl
resource "null_resource" "exec_notebook_job_mount_workspace_storage_checkpointing" {
  depends_on = [
    null_resource.create_databricks_data_eng_cluster,
    null_resource.add_workspace_notebook_storage_mount,
    azurerm_storage_container.datalake
  ]
}
```

Null Resource and Triggers

Terraform provides a ‘null_resource’ for arbitrary actions; it is basically a resource definition that manages no real infrastructure. The ‘local-exec’ provisioner within it allows various script types such as PowerShell, Bash or Cmd to be executed. Its ‘triggers’ argument controls when this happens: it takes a map of values, typically derived from one or more prerequisite resources, and whenever any of those values changes the null resource is replaced and its provisioner runs again. Using this approach we can execute any script or executable at a set point within the deployment. Note that these triggers act as a logical ‘Or’ rather than ‘And’, so a change to any one of the values is enough to cause the null resource to execute.

```hcl
resource "null_resource" "exec_notebook_job_mount_workspace_storage_checkpointing" {
  depends_on = [
    null_resource.create_databricks_data_eng_cluster,
    null_resource.add_workspace_notebook_storage_mount,
    azurerm_storage_container.datalake
  ]

  triggers = {
    create_databricks_data_eng_cluster       = "${null_resource.create_databricks_data_eng_cluster.id}_${uuid()}"
    add_workspace_notebook_workspace_storage = "${null_resource.add_workspace_notebook_storage_mount.id}_${uuid()}"
    datalake_storage                         = "${azurerm_storage_container.datalake.id}_${uuid()}"
  }

  provisioner "local-exec" {
    # ... (script invocation not shown)
  }
}
```

Note that there are multiple triggers that could cause this resource to execute the script. However, all three related resources must be deployed before the script runs, because the script depends internally on them, so we have also used the ‘depends_on’ block to ensure that everything is in place beforehand. Because each trigger value also includes uuid(), which returns a new value on every run, this particular resource is re-created, and the script re-run, on every apply.
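For comparison, here is a minimal sketch of a trigger-driven resource that re-runs only when one of its prerequisites is actually replaced, rather than on every apply. It reuses resource names from the example above, assumes those resources are defined elsewhere in the configuration, and uses a placeholder ‘echo’ command in place of the real script. Because the trigger values reference those resources’ ids, they also create implicit dependencies, so a separate ‘depends_on’ would only be needed for prerequisites that are not referenced.

```hcl
resource "null_resource" "mount_workspace_storage" {
  # If ANY value differs from the one stored in state, the resource is
  # replaced and the provisioner runs again (a logical OR across the map).
  # Referencing the ids here also makes this resource depend on both of them.
  triggers = {
    cluster_id   = null_resource.create_databricks_data_eng_cluster.id
    container_id = azurerm_storage_container.datalake.id
  }

  provisioner "local-exec" {
    # Placeholder command; substitute the real mount script here.
    command = "echo Mounting workspace storage"
  }
}
```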

Summing Up

Terraform makes dependencies a lot simpler to define than ARM templates do. The combination of implicit dependency detection plus explicit definition where required makes resource deployments much easier to define. Being able to determine dependencies implicitly across files also means fewer ‘structural constraints’ when writing IaC definitions, which increases productivity and reduces management overhead. Administrators need to think less about the order of deployment and can get on with defining the infrastructure, leaving the rest to Terraform.

Modularisation of Terraform and ARM Templates

Modularised Deployment Definition

When it comes to code, everyone loves reuse. DRY is a great principle to follow in any area of your work, and IaC is no exception. A modularised deployment definition that is centrally managed, and parameterised to suit different deployment scenarios and environments, provides massive benefits. So let’s take a quick look at how we achieve modularisation with ARM templates and Terraform.

ARM Templates

Linked/Nested Templates

ARM allows templates to be called from within templates, using links to other templates within a parent template. This means we can modularise a deployment definition by separating it across multiple files for ease of maintenance and reuse. There is one rather annoying requirement, however: the linked file must be accessible to Azure Resource Manager at the time of deployment. This means storing the templates in a location reachable from within Azure, such as a blob storage account. If we are to use linked templates, we then need to manage the deployment of the files to this location as a prerequisite of the deployment itself. We also can’t ‘peek’ into these files to validate any content or leverage any IntelliSense from within our tools.

Close Coupled Parameter Files

The close coupling of ARM template parameters with parameter files, which we mentioned in the post Deployments with Terraform and ARM Templates, presents some challenges when it comes to centralising definitions across a complete deployment. As previously mentioned, a parameter file can include only those parameters that the respective ARM template expects. This prevents us from using a single file for all parameters should we wish to do so.

Template Outputs

ARM does allow us to define outputs from linked/nested templates that can be passed back to parent templates. This is useful for items such as Resource IDs and other deployment time values.

Terraform

Terraform Modules

Any directory of Terraform .tf files forms a module that can be referenced directly from other modules. You can define one or more .tf files within the directory, with an associated variable definition file if desired. A simple example of referencing one module from another is shown below.

```hcl
module "network" {
  source        = "./modules/vpn-network"
  address_space = "10.0.0.0/8"
}
```

Here we pass a value for the module’s ‘address_space’ input variable, which the module uses when defining its ‘azurerm_virtual_network’ resource. Any other variable declared by the module can be set in the same way, using the variable name as the argument name; a module block accepts only the module’s declared input variables (plus meta-arguments such as ‘source’), not arbitrary arguments of the resources inside it.

Module references can use relative local paths, Git/GitHub repositories, Terraform registries (public or private), HTTP URLs and Azure Storage, Amazon S3 or GCS buckets. This covers pretty much any centralised storage solution that administrators might want to leverage, and provides a more flexible approach to modularised deployment definition than that offered by ARM templates. Any required modules are downloaded to the machine running Terraform during the initialisation stage, using ‘terraform init’.
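A sketch of how the ‘source’ argument expresses some of these options follows. Only one ‘source’ may be active per module block, so the alternatives are commented out to show their address formats; every organisation, repository, bucket and host name in them is a hypothetical placeholder. Changing a module’s source means running ‘terraform init’ again so that the module can be fetched.

```hcl
module "network" {
  # Local path, relative to the calling module's directory
  source = "./modules/vpn-network"

  # Alternative sources (use exactly one; all names are placeholders):
  # source = "github.com/example-org/terraform-modules//vpn-network?ref=v1.2.0"
  # source = "git::https://example.com/infra/terraform-modules.git//vpn-network?ref=v1.2.0"
  # source = "app.terraform.io/example-org/vpn-network/azurerm"  # private registry; also set version
  # source = "https://example.com/modules/vpn-network.zip"
  # source = "s3::https://s3-eu-west-1.amazonaws.com/example-bucket/vpn-network.zip"
  # source = "gcs::https://www.googleapis.com/storage/v1/example-bucket/vpn-network.zip"

  address_space = "10.0.0.0/8"
}
```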

The management of these modules is no more complex than managing any code artifacts within your organisation. No concerns over missing redeployment of linked files, and a great selection of options for centralised management. For me this is a big benefit for deployments that involve anything beyond the most basic of resources.

Deployment-Wide Variable Files

Terraform allows a single variable file to contain all the variables required by a deployment, without the hard error ARM raises for unexpected parameters (recent Terraform versions emit only a warning for values assigned to undeclared variables). Variables can be passed into other Terraform modules as required, allowing you to practise concepts such as ‘dependency inversion’, whereby the called module simply accepts its inputs from the calling module, with no knowledge of where those values come from.
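As a minimal sketch, a root module can declare the deployment-wide variables and hand each child module only the inputs it needs. The ‘environment’ variable is hypothetical and would be used elsewhere in the deployment. The ‘./modules/vpn-network’ module is assumed to declare an ‘address_space’ input, and it never learns where that value came from.

```hcl
# variables.tf (root module): deployment-wide settings
variable "environment" {
  type        = string
  description = "Deployment environment, e.g. dev or prod"
}

variable "address_space" {
  type        = string
  description = "CIDR range for the virtual network"
}

# main.tf (root module): pass the child module only the input it declares
module "network" {
  source        = "./modules/vpn-network"
  address_space = var.address_space
}

# terraform.tfvars (root module): one file holding values for the whole deployment
# environment   = "dev"
# address_space = "10.0.0.0/8"
```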

Module Outputs

Each Terraform module can also supply outputs, allowing resultant values such as resource IDs to be passed back for use in the calling module (as with ARM templates).
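Here is a sketch of a module output and its use by the caller. It assumes the child module defines an ‘azurerm_virtual_network’ named ‘example’, as in the basic module shown in the Deployments with Terraform and ARM Templates post, and that the caller names the module block ‘network’.

```hcl
# modules/vpn-network/outputs.tf (child module)
output "vnet_id" {
  description = "Resource ID of the virtual network created by this module"
  value       = azurerm_virtual_network.example.id
}

# Root module: read the child's output as module.<module name>.<output name>
output "network_vnet_id" {
  value = module.network.vnet_id
}
```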

Conclusion

Terraform and ARM templates take different approaches to modularisation, providing varying levels of reuse and centralised management. ARM’s linked/nested templates lack the flexibility in referencing and storage that Terraform provides, and the additional steps required to ensure that the linked files are in place when needed present a potential source of deployment failure. Terraform’s ability to retrieve modules from pretty much any repository type offers considerable advantages. Coupled with its less restrictive use of variables compared to ARM parameters, this puts Terraform clearly on top in this area.

You can find a great guide on IaC best practices using Terraform and Azure from Julien Corioland of Microsoft at https://github.com/jcorioland/terraform-azure-reference.

Deployments with Terraform and ARM Templates

Introduction

For those organisations hosting their IT resources on Azure, the most popular solutions for cloud resource deployments are Azure Resource Manager (ARM) Templates and Terraform.

Azure’s go-to-market offering for automating cloud resource management is provided through ARM Templates. These are a mature offering, with the capability to generate templates for previously deployed resources and a library of ready-rolled templates from Azure community contributors. For organisations adopting an Infrastructure as Code (IaC) paradigm for DevOps on Azure, this is the solution most consider first.

The first question many ask when considering an alternative to Microsoft’s ARM template approach to deployment is quite simply “Why bother”? We already have a pretty good approach to managing our infrastructure deployments within Azure, which can be parameterised, version controlled and automated for the vast majority of situations that we will need.

In this first post on the subject of using Terraform on Microsoft Azure, we will take a look at some of the differences between how deployments are defined within Terraform and ARM templates.

Deployment Definition

When defining our deployments, the actual scope of what is included using Terraform or ARM templates is determined using two quite different approaches.

ARM Templates

Azure uses ARM templates to define a unit of deployment. Each template is a self-contained deployment, and is executed using either PowerShell or the Azure CLI, as below:

Using PowerShell:

```powershell
$templateFile = "{provide-the-path-to-the-template-file}"
$parameterFile = "{path-to-azuredeploy.parameters.dev.json}"

New-AzResourceGroup `
  -Name myResourceGroupDev `
  -Location "East US"

New-AzResourceGroupDeployment `
  -Name devenvironment `
  -ResourceGroupName myResourceGroupDev `
  -TemplateFile $templateFile `
  -TemplateParameterFile $parameterFile
```

Using the Azure CLI:

```bash
templateFile="{provide-the-path-to-the-template-file}"

az group create \
  --name myResourceGroupDev \
  --location "East US"

az deployment group create \
  --name devenvironment \
  --resource-group myResourceGroupDev \
  --template-file "$templateFile" \
  --parameters azuredeploy.parameters.dev.json
```

You’ll notice that we have specified a Resource Group as the target of the deployment. We have also specified a parameter file for the deployment, which we’ll touch upon later. All very straightforward really, though this does present some challenges, as described below.

Target Resource Group

Each resource group deployment targets a pre-existing Resource Group, so if we are using a new Resource Group we need to run a PowerShell or Azure CLI command to create it before executing the ARM template. While not exactly a deal breaker, this does require ‘breaking out’ of ARM to satisfy the prerequisite.

Deployment Specification

ARM templates use JSON to define the deployment resources and parameters. These can of course be written using any JSON-friendly editor. VSCode has a nice extension from Microsoft that helps with syntax linting, IntelliSense, snippets and more; just search the VSCode marketplace for “ARM” from Microsoft. Here’s a quick example of a basic ARM template showing how we can parameterise our deployment.

```json
{
  "$schema": "https://schema.management.azure.com/schemas/2015-01-01/deploymentTemplate.json#",
  "contentVersion": "1.0.0.0",
  "parameters": {
    "storageName": {
      "type": "string",
      "minLength": 3,
      "maxLength": 24
    }
  },
  "resources": [
    {
      "type": "Microsoft.Storage/storageAccounts",
      "apiVersion": "2019-04-01",
      "name": "[parameters('storageName')]",
      "location": "eastus",
      "sku": {
        "name": "Standard_LRS"
      },
      "kind": "StorageV2",
      "properties": {
        "supportsHttpsTrafficOnly": true
      }
    }
  ]
}
```

And here is the accompanying parameter file.

```json
{
  "$schema": "https://schema.management.azure.com/schemas/2015-01-01/deploymentParameters.json#",
  "contentVersion": "1.0.0.0",
  "parameters": {
    "storageName": {
      "value": "myuniquesaname"
    }
  }
}
```

Parameter File Reuse Complexities

Each parameter file is best used with a single ARM template, because if the parameter file contains parameters that are not defined within the template, the deployment fails with an error. This close coupling of the parameter file with the parameter definitions in the ARM template makes reuse of parameter files across templates very difficult. There are basically two approaches to handling this, neither of which is pretty.

- Repeat the common parameters in each parameter file and use multiple parameter files. Keeping these in sync presents something of a risk to deployments.

- Repeat the parameter definitions for unneeded parameters in each ARM template, allowing a single parameter file to be used across templates. This could soon result in templates with more redundant parameters than parameters actually in use.

Auto-Generation of ARM Templates from Azure

The ability to generate ARM templates from existing resources within Azure from the portal is a considerable benefit when bringing items into the IaC code base. This can save a lot of time with writing templates.

Terraform Modules

Terraform defines deployment resources within one or more files, with the ‘.tf’ extension. The language used is ‘HashiCorp Configuration Language’ or ‘HCL’. This is similar in appearance to JSON, with maybe some YAML-like traits sprinkled in I guess. I personally find it slightly easier to work with than JSON. Here’s a basic module.

```hcl
# Configure the Azure Provider
provider "azurerm" {
  version = "=1.38.0"
}

variable "address_space" {
  type = string
}

# Create a resource group
resource "azurerm_resource_group" "example" {
  name     = "production"
  location = "West US"
}

# Create a virtual network within the resource group
resource "azurerm_virtual_network" "example" {
  name                = "production-network"
  resource_group_name = azurerm_resource_group.example.name
  location            = azurerm_resource_group.example.location
  address_space       = [var.address_space]
}
```

Root Module

When executing a Terraform command such as plan, apply or validate, Terraform treats all the .tf files within the current folder as forming what is termed the ‘root module’. These files can in turn reference other modules, each defined by the .tf files in its own directory.

Terraform can create resource groups as part of the deployment, so there is no need to create these beforehand. Although this may seem a small point, it allows for a more complete specification and a unified approach to encapsulating the whole deployment.

Variable Files

Terraform uses variables to parameterise deployments. These can be declared within any .tf file, but by convention they are generally defined in a single file called ‘variables.tf’ for ease of maintenance, usually located in the root module directory. Values for the variables can either be set as defaults within the variable declarations or supplied in a file with the extension ‘.tfvars’. For the root module, Terraform automatically loads a file called ‘terraform.tfvars’ and any files ending in ‘.auto.tfvars’, if present, without the need to specify them. Variables can be used across multiple deployments, without the coupling of definitions in two places that we have with ARM templates and their parameter files. Variable files and individual variables can also be supplied at the command line when running a Terraform command such as plan or apply, allowing a further level of control.
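Here is a minimal sketch of the pieces described above; the variable names, values and the ‘dev.tfvars’ file name are illustrative. A default makes a variable optional, ‘terraform.tfvars’ is picked up automatically, and values given on the command line take precedence over both.

```hcl
# variables.tf
variable "location" {
  type        = string
  description = "Azure region for all resources"
  default     = "West Europe" # used when no other value is supplied
}

variable "address_space" {
  type        = string
  description = "CIDR range for the virtual network"
  # No default, so a value must be supplied from a .tfvars file or the command line
}

# terraform.tfvars (loaded automatically from the root module directory):
#   location      = "North Europe"
#   address_space = "10.0.0.0/16"

# Command-line values override the files above; when several are given,
# later ones win, so address_space below ends up as 10.1.0.0/16:
#   terraform plan -var-file="dev.tfvars" -var="address_space=10.1.0.0/16"
```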

Auto-Generation from Azure

Terraform does not provide any way of generating module files from pre-existing resources within Azure. There are feature requests to extend the ‘import’ functionality so that specific resources could be imported into module .tf configuration files; this would make a real difference to productivity when migrating existing deployments into the IaC code base.
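For comparison, here is a sketch of what bringing an existing resource under Terraform management involves with the classic ‘terraform import’ command. The command only records the resource in Terraform state, so the matching resource block has to be written by hand first. The resource group name matches the earlier basic module, and the subscription ID is a placeholder.

```hcl
# Hand-written block describing the existing resource group
resource "azurerm_resource_group" "production" {
  name     = "production"
  location = "West US"
}

# Then, from the root module directory (placeholder subscription ID):
#   terraform import azurerm_resource_group.production /subscriptions/00000000-0000-0000-0000-000000000000/resourceGroups/production
#
# A subsequent `terraform plan` highlights any attributes where the
# hand-written block differs from the real resource, so it can be corrected.
```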

In Summary

Although they share essentially the same goal, Terraform and ARM templates go about deployments in slightly different ways. These differences may not appear to be of much consequence, but as you’ll see later in this series, the way the code is structured and resources are managed results in some strengths and weaknesses for each. We’ve only touched on some of the deployment definition basics here. In the next posts we’ll discuss modularisation of our IaC code and resource dependency management, before diving into how we integrate our Terraform efforts with Azure DevOps, so that infrastructure deployments are as controlled and well managed as those of our application code. I hope this has served as a good overview and introduction for those working on Azure, and that the rest of the series will provide some valuable insight into using Terraform for your DevOps needs.

Terraform on Azure

Welcome to our series of posts on Terraform and how it is leveraged within Azure to control your infrastructure.

We’ll be looking at how it compares with the native Azure ARM technology, how to structure your resource definitions, and various technical aspects to help you embrace Terraform as a broad and deep resource management tool.

So over to our first article, where we discuss the nature of the deployments on Terraform and how they differ to those using Azure ARM templates.